MULTI-SCALE MOVEMENT TECHNOLOGIES

ACM ICMI 2020

INTERNATIONAL WORKSHOP

Utrecht, the Netherlands, October 29, 2020

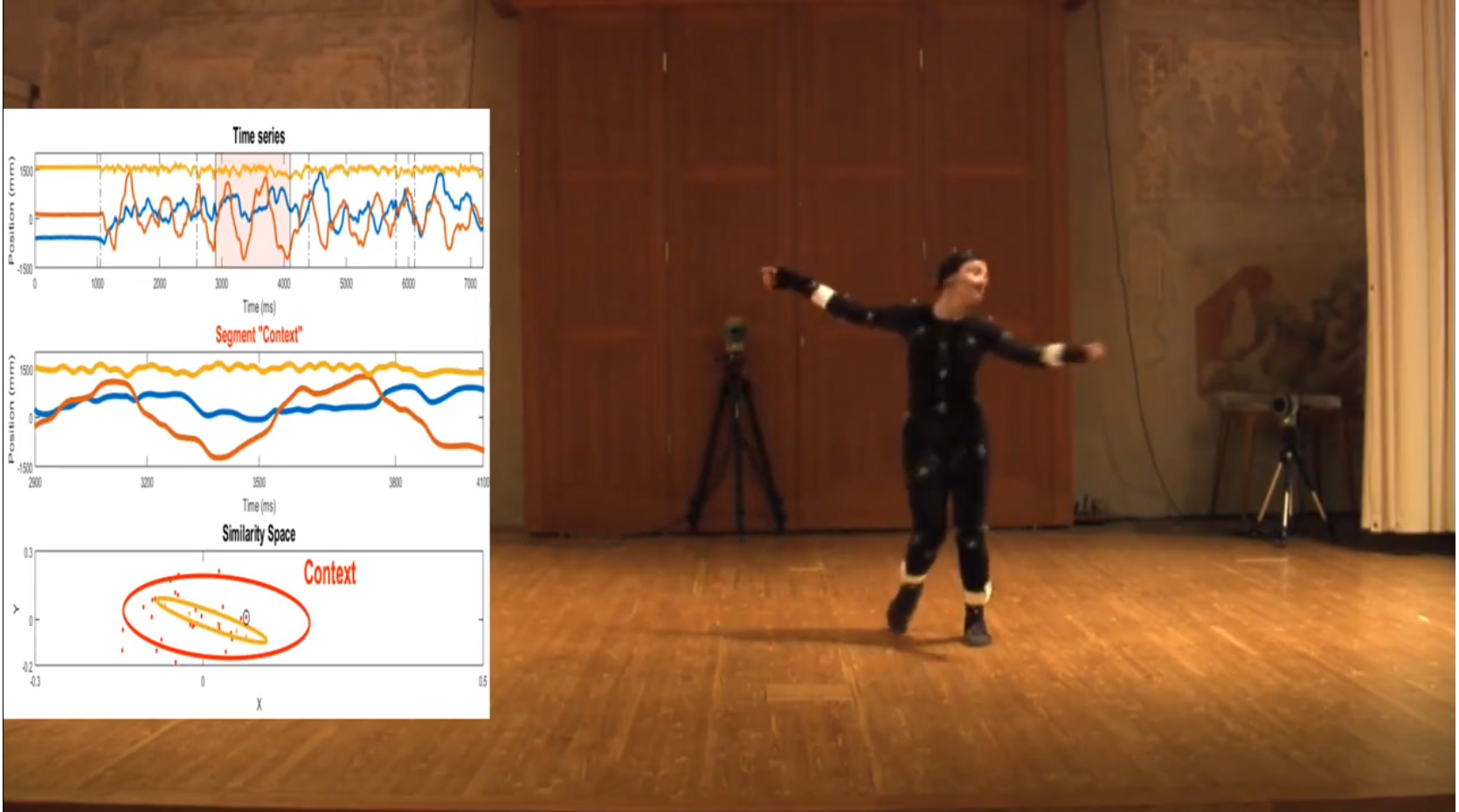

Reckoning with the mutually interactive time scales that characterize human behavior is now a major multidisciplinary challenge for qualitative analysis of human movement, entrainment and prediction. Innovative, scientifically grounded and time-adaptive technologies operating at multiple time scales in a multi-layered approach are a promising direction for multimodal interfaces.

This workshop aims at stimulating submissions on novel computational models and systems for the automated detection, measurement, and prediction of movement qualities from behavioural signals, based on multi-layer parallel processes at non-linearly stratified temporal dimensions.

Furthermore, the workshop invites contributions towards novel technologies for human movement analysis that go beyond the well-known motion capture paradigm. Future motion capture and movement analysis systems will be endowed with completely new functionality, leading to a new generation of time-aware multisensory motion perception and prediction systems.

Contributions on computational models, multimodal systems, and experiments addressing the above-mentioned core topics are welcome, as are application scenarios including healing, therapy and rehabilitation, entertainment, performing arts (music, dance), and active experience of multimedia cultural content.

The workshop is partially supported by the EU-H2020-FET Proactive Project GA824160 EnTimeMent. It will build on the results of the first year of EnTimeMent, while also being open to new perspectives and experiences.

© 2019-2020 EnTimeMent Project

EnTimeMent has received funding from the European Union's Horizon 2020 Research and Innovation Programme under Grant Agreement No. 824160.